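Technical approach: requests-bs4-re

First, open the famous-quotes website https://mingyan.supfree.net/search.asp.

Then view the page source. You can see that the quotes are all stored inside a table tag, so we can use the bs4 library to find the tags.

That is, soup1 = soup.find('table') finds the table tag, and then we look for the a tags inside it with stockInfo = soup1.find_all('a'). At this point stockInfo is a bs4.element.ResultSet (a list of bs4.element.Tag objects) rather than a string. It must be converted to str before the re library can search it precisely: str1 = str(stockInfo). (For converting bs4.element.Tag to a string, see https://www.jianshu.com/p/d67a3858728c.)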
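This sketch shows the same narrowing idea, first the table and then the a tags inside it, without a third-party package. It is a rough stand-in built on the standard library's html.parser, not on bs4. The class name TableLinkParser and the sample HTML are hypothetical. The parser only imitates find('table') by ignoring everything after the first table closes.

```python
from html.parser import HTMLParser


class TableLinkParser(HTMLParser):
    """Collect (href, text) pairs for <a> tags inside the first <table>.

    A stdlib stand-in for soup.find('table').find_all('a'), not bs4 itself.
    """

    def __init__(self):
        super().__init__()
        self.table_depth = 0      # > 0 while inside the first table
        self.done = False         # True once the first table has closed
        self.current_href = None  # href of the <a> currently being read
        self.current_text = []
        self.links = []           # collected (href, text) pairs

    def handle_starttag(self, tag, attrs):
        if self.done:
            return
        if tag == 'table':
            self.table_depth += 1
        elif tag == 'a' and self.table_depth > 0:
            self.current_href = dict(attrs).get('href')
            self.current_text = []

    def handle_endtag(self, tag):
        if self.done:
            return
        if tag == 'table' and self.table_depth > 0:
            self.table_depth -= 1
            if self.table_depth == 0:
                self.done = True  # like find('table'): ignore later tables
        elif tag == 'a' and self.current_href is not None:
            self.links.append((self.current_href, ''.join(self.current_text)))
            self.current_href = None

    def handle_data(self, data):
        if self.current_href is not None:
            self.current_text.append(data)


# Hypothetical page: one link before the table, two inside it, one in a second table
sample = (
    '<a href="index.asp">首页</a>'
    '<table><tr>'
    '<td><a href="honda.asp?id=1" target="_blank">知识就是力量。</a></td>'
    '<td><a href="toyota.asp?id=培根" target="_blank">培根</a></td>'
    '</tr></table>'
    '<table><tr><td><a href="other.asp">其他</a></td></tr></table>'
)
parser = TableLinkParser()
parser.feed(sample)
parser.close()
print(parser.links)
# [('honda.asp?id=1', '知识就是力量。'), ('toyota.asp?id=培根', '培根')]
```

The depth counter lets a nested table inside the first one keep its links, much as find_all searches all descendants.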

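The URL of the next page can be changed through the params argument of requests, so a for loop that varies the page number gives us pagination. See the figure below for the exact parameter settings. Here I only crawl the first page, i.e.:

```python
for i in range(1, 2):  # range(1, 2) yields only page 1
    kv = {'page': i}   # sent as the query string ?page=i
    r = requests.get('https://mingyan.supfree.net/search.asp', params=kv)
```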
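When params is a dictionary, requests appends it to the URL as a query string. The small sketch below uses the standard library's urllib.parse.urlencode to show the page URLs such a loop would request. It assumes the site keeps using a single page parameter; BASE_URL and the three-page range are only illustrative.

```python
from urllib.parse import urlencode

BASE_URL = 'https://mingyan.supfree.net/search.asp'

# Query strings equivalent to requests.get(BASE_URL, params={'page': i}) for pages 1-3
urls = [BASE_URL + '?' + urlencode({'page': i}) for i in range(1, 4)]
for u in urls:
    print(u)
# https://mingyan.supfree.net/search.asp?page=1
# https://mingyan.supfree.net/search.asp?page=2
# https://mingyan.supfree.net/search.asp?page=3
```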

3. Step 3

Finally, use regular expressions from the re library to extract the text precisely:

```python
contents = re.findall(r'<a href="honda\.asp\?id=\d+" target="_blank">(.*?)</a>', str1)  # quote texts
authors = re.findall(r'<a href="toyota\.asp\?id=[\u4e00-\u9fa5]+" target="_blank">(.*?)</a>', str1)  # author names
```
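You can see what the two patterns capture without contacting the site by running them on a hand-written string. The sample below is hypothetical. It imitates what str(stockInfo) is assumed to look like, a list-style rendering of the a tags, and uses the same honda.asp and toyota.asp link shapes as the patterns.

```python
import re

# Hypothetical stand-in for str(stockInfo): a list-style string of <a> tags
str1 = ('[<a href="honda.asp?id=101" target="_blank">知识就是力量。</a>, '
        '<a href="toyota.asp?id=培根" target="_blank">培根</a>]')

# Lazy (.*?) stops at the first </a>, so each match is one link's text
contents = re.findall(r'<a href="honda\.asp\?id=\d+" target="_blank">(.*?)</a>', str1)
authors = re.findall(r'<a href="toyota\.asp\?id=[\u4e00-\u9fa5]+" target="_blank">(.*?)</a>', str1)
print(contents)  # ['知识就是力量。']
print(authors)   # ['培根']
```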

Full code

```python
import requests
import re
from bs4 import BeautifulSoup

# Use bs4 and re to extract the text we want from the HTML
for i in range(1, 2):
    kv = {'page': i}
    r = requests.get('https://mingyan.supfree.net/search.asp', params=kv)
    r.raise_for_status()
    r.encoding = r.apparent_encoding
    html = r.text
    soup = BeautifulSoup(html, 'html.parser')
    soup1 = soup.find('table')       # the quotes are stored inside the table
    stockInfo = soup1.find_all('a')  # every link inside that table
    str1 = str(stockInfo)            # ResultSet -> string so re can search it
    contents = re.findall(r'<a href="honda\.asp\?id=\d+" target="_blank">(.*?)</a>', str1)
    authors = re.findall(r'<a href="toyota\.asp\?id=[\u4e00-\u9fa5]+" target="_blank">(.*?)</a>', str1)
    print(contents)
    print(authors)
```

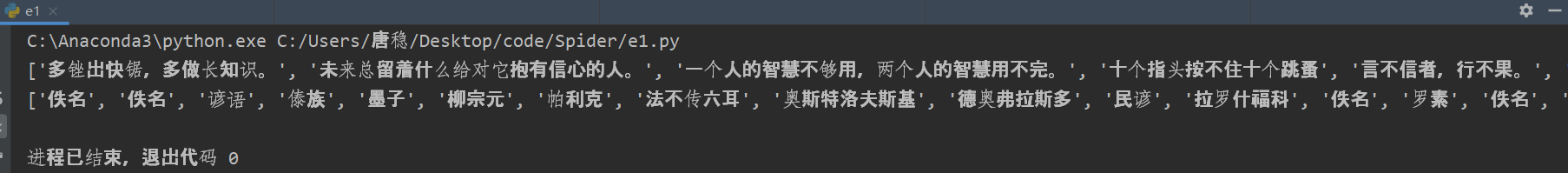

The screenshot shows the output of the run.

Method 2: search the text directly with the re library

```python
import requests
import re
# from bs4 import BeautifulSoup  (not needed: the regex runs on the raw HTML)

for i in range(1, 2):
    kv = {'page': i}
    r = requests.get('https://mingyan.supfree.net/search.asp', params=kv)
    r.raise_for_status()
    r.encoding = r.apparent_encoding
    html = r.text
    # Search the raw HTML directly; note that target comes before href in these patterns
    contents = re.findall(r'<a target="_blank" href="honda\.asp\?id=\d+">(.*?)</a>', html)
    authors = re.findall(r'<a target="_blank" href="toyota\.asp\?id=[\u4e00-\u9fa5]+">(.*?)</a>', html)
    print(contents)
    print(authors)
```
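A regular expression matches the tag text character by character, so it is sensitive to attribute order. A pattern written with href before target will not match a tag that lists target first. That is presumably why the method 2 patterns are written differently from method 1. The sketch below uses two hypothetical renderings of the same link. The last pattern is an illustrative order-independent variant and is not part of the original code.

```python
import re

# Two hypothetical renderings of the same link that differ only in attribute order
href_first = '<a href="honda.asp?id=101" target="_blank">知识就是力量。</a>'
target_first = '<a target="_blank" href="honda.asp?id=101">知识就是力量。</a>'

method1 = r'<a href="honda\.asp\?id=\d+" target="_blank">(.*?)</a>'  # pattern from method 1
method2 = r'<a target="_blank" href="honda\.asp\?id=\d+">(.*?)</a>'  # pattern from method 2
print(re.findall(method1, href_first), re.findall(method1, target_first))
# ['知识就是力量。'] []
print(re.findall(method2, href_first), re.findall(method2, target_first))
# [] ['知识就是力量。']

# Illustrative order-independent variant: allow other attributes around href
either = r'<a\b[^>]*\bhref="honda\.asp\?id=\d+"[^>]*>(.*?)</a>'
print(re.findall(either, href_first), re.findall(either, target_first))
# ['知识就是力量。'] ['知识就是力量。']
```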

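Follow-up

The earlier code had a problem: it did not recognize Chinese punctuation marks such as 。 ； ， ： “ ” （ ） 、 ？ 《 》. The regular expression needs to be improved. Chinese punctuation can be matched with: [\u3002\uff1b\uff0c\uff1a\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\u300b]

The regular expression for matching Chinese characters is: [\u4e00-\u9fa5]

Combining the two, the following class matches both Chinese characters and Chinese punctuation: [\u3002\uff1b\uff0c\uff1a\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\u300b\u4e00-\u9fa5]+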
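You can see the difference between the two character classes by checking them against a hypothetical author ID that contains book-title marks. The same sketch shows that a lazy .*? already accepts Chinese punctuation. This suggests the missed matches came from character classes such as [\u4e00-\u9fa5]+ in the author pattern, not from the .*? in the quote pattern.

```python
import re

PUNCT = r'\u3002\uff1b\uff0c\uff1a\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\u300b'  # Chinese punctuation
HANZI = r'\u4e00-\u9fa5'  # common Chinese characters

hanzi_only = re.compile('[' + HANZI + ']+')
hanzi_and_punct = re.compile('[' + PUNCT + HANZI + ']+')

name = '《论语》'  # hypothetical author ID with book-title marks
print(bool(hanzi_only.fullmatch(name)))       # False
print(bool(hanzi_and_punct.fullmatch(name)))  # True

# The quote pattern's lazy (.*?) already captures text containing Chinese punctuation
link = '<a href="honda.asp?id=7" target="_blank">知识，就是力量。</a>'  # hypothetical
print(re.findall(r'<a href="honda\.asp\?id=\d+" target="_blank">(.*?)</a>', link))
# ['知识，就是力量。']
```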

```python
import requests
import re
from bs4 import BeautifulSoup

# Use bs4 and re to extract the text we want from the HTML
for i in range(1, 2):
    kv = {'page': i}
    r = requests.get('https://mingyan.supfree.net/search.asp', params=kv)
    r.raise_for_status()
    r.encoding = r.apparent_encoding
    html = r.text
    soup = BeautifulSoup(html, 'html.parser')
    soup1 = soup.find('table')
    stockInfo = soup1.find_all('a')
    str1 = str(stockInfo)
    contents = re.findall(r'<a href="honda\.asp\?id=\d+" target="_blank">(.*?|[\u3002\uff1b\uff0c\uff1a\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\u300b].*?)</a>', str1)
    authors = re.findall(r'<a href="toyota\.asp\?id=[\u3002\uff1b\uff0c\uff1a\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\u300b\u4e00-\u9fa5]+" target="_blank">([\u3002\uff1b\uff0c\uff1a\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\u300b\u4e00-\u9fa5]+)</a>', str1)
    lists = []
    # Pair each quote with its author; zip() stops at the shorter list instead of
    # raising IndexError (and avoids reusing the outer loop variable i)
    for content, author in zip(contents, authors):
        lists.append({'名言content': content, '名人author': author})
    print(lists)
    print(len(lists))
```

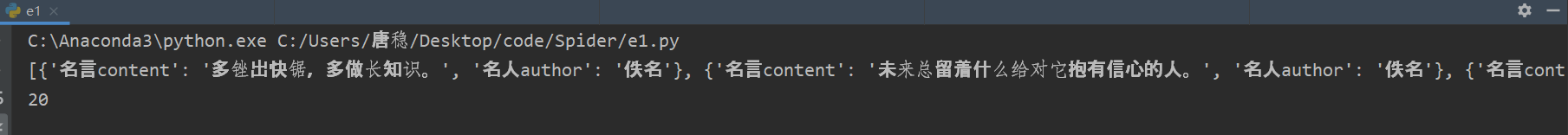

The screenshot shows the result.
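The loop that builds lists assumes contents and authors have the same length. The sketch below uses hypothetical lists in which one author link failed to match. Indexing authors[i] raises IndexError, while zip() pairs as many items as it can. Neither approach can tell which author is missing, so comparing the two lengths remains the real check.

```python
# Hypothetical regex results: one author link failed to match
contents = ['知识就是力量。', '学而时习之。', '温故而知新。']
authors = ['培根', '孔子']

# Index-based pairing, as in the original loop, breaks on a length mismatch
try:
    paired = [{'名言content': contents[i], '名人author': authors[i]}
              for i in range(len(contents))]
except IndexError:
    print('IndexError: fewer authors than quotes')

# zip() stops at the shorter list without an error, so compare lengths first
if len(contents) != len(authors):
    print(f'warning: {len(contents)} quotes but {len(authors)} authors')
lists = [{'名言content': c, '名人author': a} for c, a in zip(contents, authors)]
print(len(lists))  # 2
```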

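I found another small bug, so I modified the regular-expression part of the code, reorganized it into functions, and added the ability to save the data as a JSON file.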
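The JSON step relies on json.dump(..., ensure_ascii=False) together with an explicit encoding='utf-8', which keeps the Chinese text readable in the file. Below is a standalone sketch with hypothetical data. It writes to a temporary directory instead of a.json.

```python
import json
import os
import tempfile

# Hypothetical data in the same shape as the crawler's lists
lists = [{'名言content': '知识就是力量。', '名人author': '培根'}]

# Default ensure_ascii=True escapes every Chinese character as \uXXXX
print(json.dumps(lists))
# ensure_ascii=False keeps the characters readable
print(json.dumps(lists, ensure_ascii=False))
# [{"名言content": "知识就是力量。", "名人author": "培根"}]

# Write and read back with an explicit UTF-8 encoding
path = os.path.join(tempfile.mkdtemp(), 'a.json')
with open(path, 'w', encoding='utf-8') as f:
    json.dump(lists, f, ensure_ascii=False, indent=0)
with open(path, encoding='utf-8') as f:
    loaded = json.load(f)
print(loaded == lists)  # True
```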

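```python
import requests
import re
from bs4 import BeautifulSoup
import json
import time

lists = []  # global list; quotes from every crawled page are appended here


def gethtml(url, kv):
    # Download one results page and let requests guess the encoding
    r = requests.get(url, params=kv)
    r.raise_for_status()
    r.encoding = r.apparent_encoding
    return r.text


def re_html(html):
    # Use bs4 and re to extract the text we want from the HTML
    soup = BeautifulSoup(html, 'html.parser')
    soup1 = soup.find('table')
    stockInfo = soup1.find_all('a')
    str1 = str(stockInfo)
    # \u3002 (。) takes a single backslash: in a raw string, a doubled one matches a literal backslash
    contents = re.findall(r'<a href="honda\.asp\?id=\d+" target="_blank">(.*?|[+\u3002\uff1b\uff0c\uff1a\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\u300b]+.*?)</a>', str1)
    authors = re.findall(r'<a href="toyota\.asp\?id=[+\u3002\uff1b\uff0c\uff1a\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\+\u300b\u4e00-\u9fa5_a-zA-Z0-9]+.*?" target="_blank">([\u3002\uff1b\uff0c\uff1a\+\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\u300b\u4e00-\u9fa5_a-zA-Z0-9]+.*?)</a>', str1)
    print(contents)
    print(authors)
    print(len(contents))
    print(len(authors))
    # zip() pairs quotes with authors and stops at the shorter list
    # (indexing authors[i] raises IndexError when fewer authors match)
    for content, author in zip(contents, authors):
        lists.append({'名言content': content, '名人author': author})
    return lists


def save_json(lists):
    with open('a.json', 'w+', encoding='utf-8') as f:
        json.dump(lists, f, ensure_ascii=False, indent=0)


def main():
    # Parse input such as "1,10" without eval()
    a, b = map(int, input('Enter the page range to crawl (e.g. 1,10 means pages 1 to 10): ').split(','))
    start = time.perf_counter()
    url = 'https://mingyan.supfree.net/search.asp'
    for i in range(a, b + 1):
        kv = {'page': i}
        html = gethtml(url, kv)
        lists = re_html(html)
        save_json(lists)  # rewrite a.json after each page so progress is kept
    end = time.perf_counter()
    print(end - start)


main()
```

Another small bug appeared: the characters 【】 and - were not recognized, so I added 【】 (\u3010\u3011) and - to the character classes in the regular expression. The crawl is also timed with time.perf_counter to measure how long it takes, and this version prints each page number as it is crawled.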
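You can check the effect of adding 【】 and - to the character class on its own. The sketch uses a hypothetical author text and simplified versions of the class, not the full pattern. It also shows a pitfall to watch for in long hand-written classes like these. In a raw string, a doubled backslash before u3002 is a literal backslash, so the class no longer matches 。.

```python
import re

name = '【佚名】-民谚'  # hypothetical author text containing 【】 and a hyphen

old_class = r'[\u3002\u4e00-\u9fa5_a-zA-Z0-9]+'               # simplified: without 【】 and -
new_class = r'[\u3010\u3011\u3002\u4e00-\u9fa5\-_a-zA-Z0-9]+'  # simplified: with 【】 and -
print(bool(re.fullmatch(old_class, name)))  # False
print(bool(re.fullmatch(new_class, name)))  # True

# Pitfall: in a raw string, a doubled backslash before u3002 is a literal backslash,
# so this class holds the characters \ u 3 0 2 instead of the full stop 。
print(bool(re.fullmatch(r'[\\u3002]', '。')))  # False
print(bool(re.fullmatch(r'[\u3002]', '。')))   # True
```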

```python
import requests
import re
from bs4 import BeautifulSoup
import json
import time

lists = []  # global list; quotes from every crawled page are appended here


def gethtml(url, kv):
    # Download one results page and let requests guess the encoding
    r = requests.get(url, params=kv)
    r.raise_for_status()
    r.encoding = r.apparent_encoding
    return r.text


def re_html(html):
    # Use bs4 and re to extract the text we want from the HTML
    soup = BeautifulSoup(html, 'html.parser')
    soup1 = soup.find('table')
    stockInfo = soup1.find_all('a')
    str1 = str(stockInfo)
    # \u3002 (。) takes a single backslash: in a raw string, a doubled one matches a literal backslash
    contents = re.findall(r'<a href="honda\.asp\?id=\d+" target="_blank">(.*?|[+\u3002\uff1b\uff0c\uff1a\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\u300b]+.*?)</a>', str1)
    # \u3010\u3011 (【】) and \- added to both author character classes
    authors = re.findall(r'<a href="toyota\.asp\?id=[\u3010\u3011+\u3002\uff1b\uff0c\uff1a\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\+\u300b\u4e00-\u9fa5\-_a-zA-Z0-9]+.*?" target="_blank">([\u3010\u3011\u3002\uff1b\uff0c\uff1a\+\u201c\u201d\uff08\uff09\u3001\uff1f\u300a\u300b\u4e00-\u9fa5\-_a-zA-Z0-9]+.*?)</a>', str1)
    print(contents)
    print(authors)
    print(len(contents))
    print(len(authors))
    # zip() pairs quotes with authors and stops at the shorter list
    for content, author in zip(contents, authors):
        lists.append({'名言content': content, '名人author': author})
    return lists


def save_json(lists):
    with open('a.json', 'w+', encoding='utf-8') as f:
        json.dump(lists, f, ensure_ascii=False, indent=0)


def main():
    # Parse input such as "1,10" without eval()
    a, b = map(int, input('Enter the page range to crawl (e.g. 1,10 means pages 1 to 10): ').split(','))
    start = time.perf_counter()  # time the whole crawl
    url = 'https://mingyan.supfree.net/search.asp'
    for i in range(a, b + 1):
        kv = {'page': i}
        print(i)  # current page number
        html = gethtml(url, kv)
        lists = re_html(html)
        save_json(lists)  # rewrite a.json after each page so progress is kept
    end = time.perf_counter()
    print(end - start)


main()
```

Summary

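Crawling Chinese text always seems to run into small problems, but that does not get in the way of understanding the underlying principles.

Follow-up: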

https://blog.csdn.net/Verilogerr/article/details/106203709
